Add AI badly

- Hallucinated reply to a real customer

- Compliance breach buried in your audit log

- Viral screenshot Monday morning

- Lawyer email Tuesday

Support chatbot · code review assistant · KYC bot · refund agent · document summarizer · incident postmortem writer. Same trap.

There's a third option.

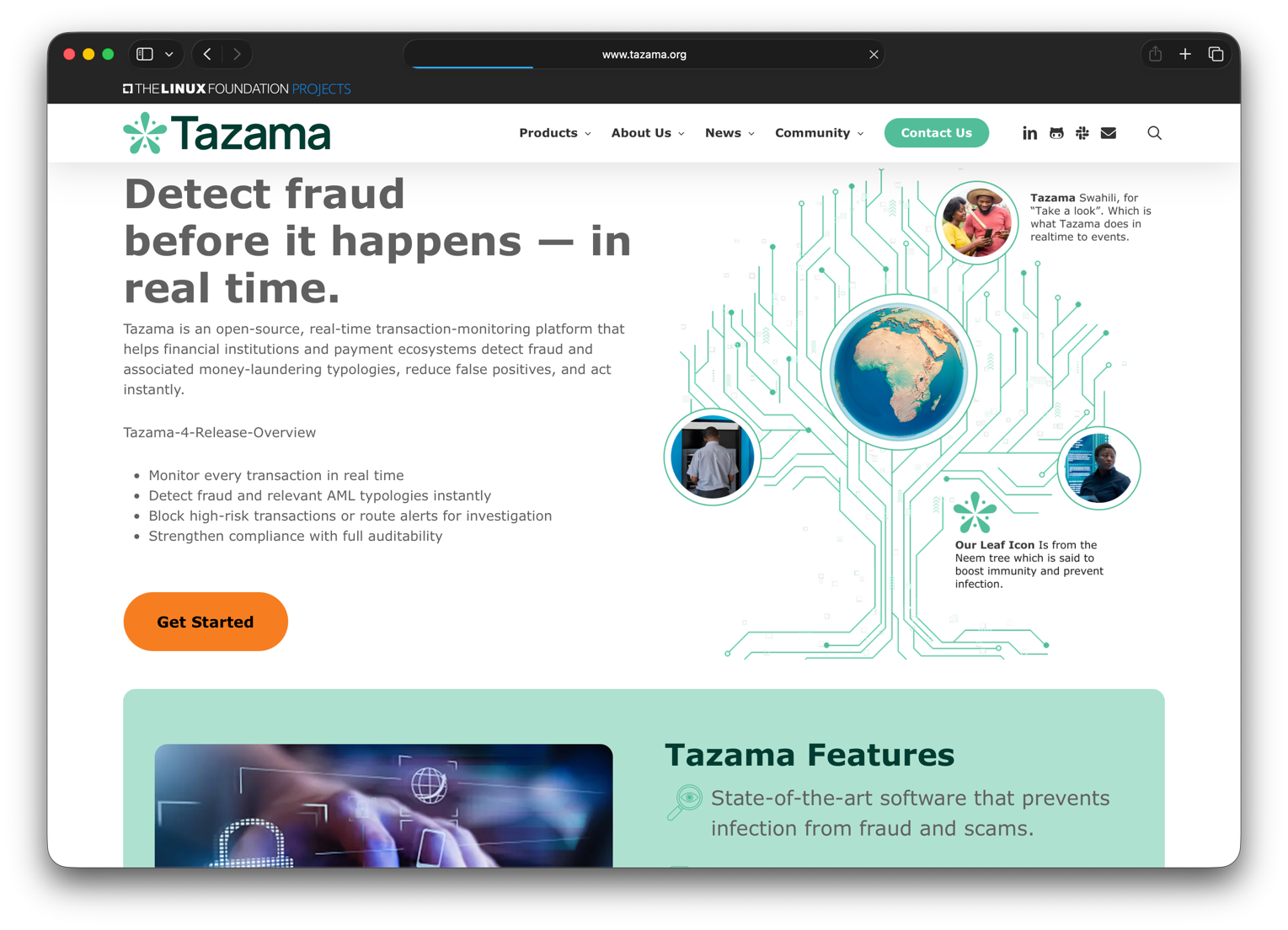

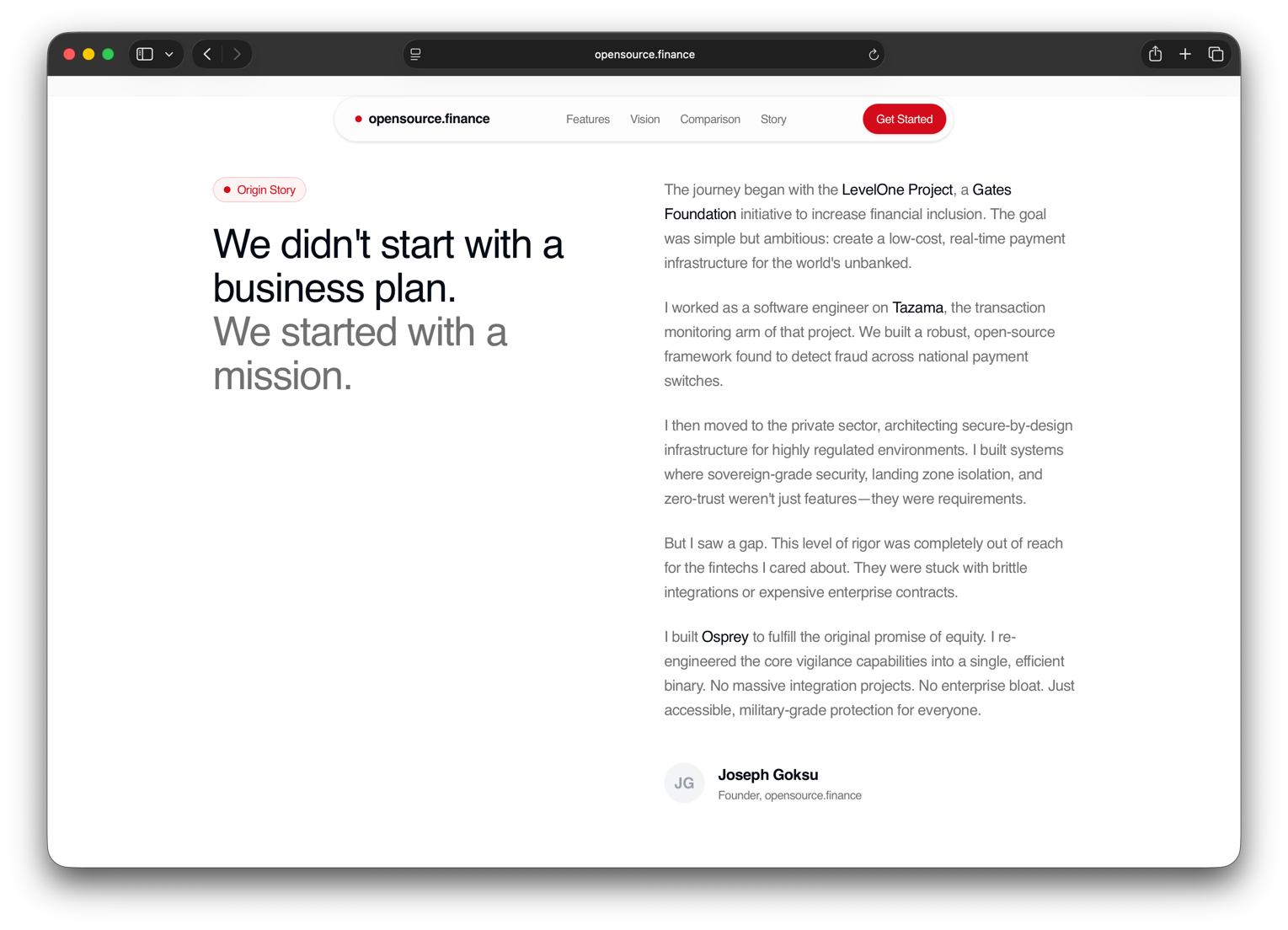

Yusuf Goksu. Platform engineer, Cambridge. One of the first engineers on Tazama, which was funded by the Gates Foundation and is now a Linux Foundation project. AWS Community Builder since 2022.

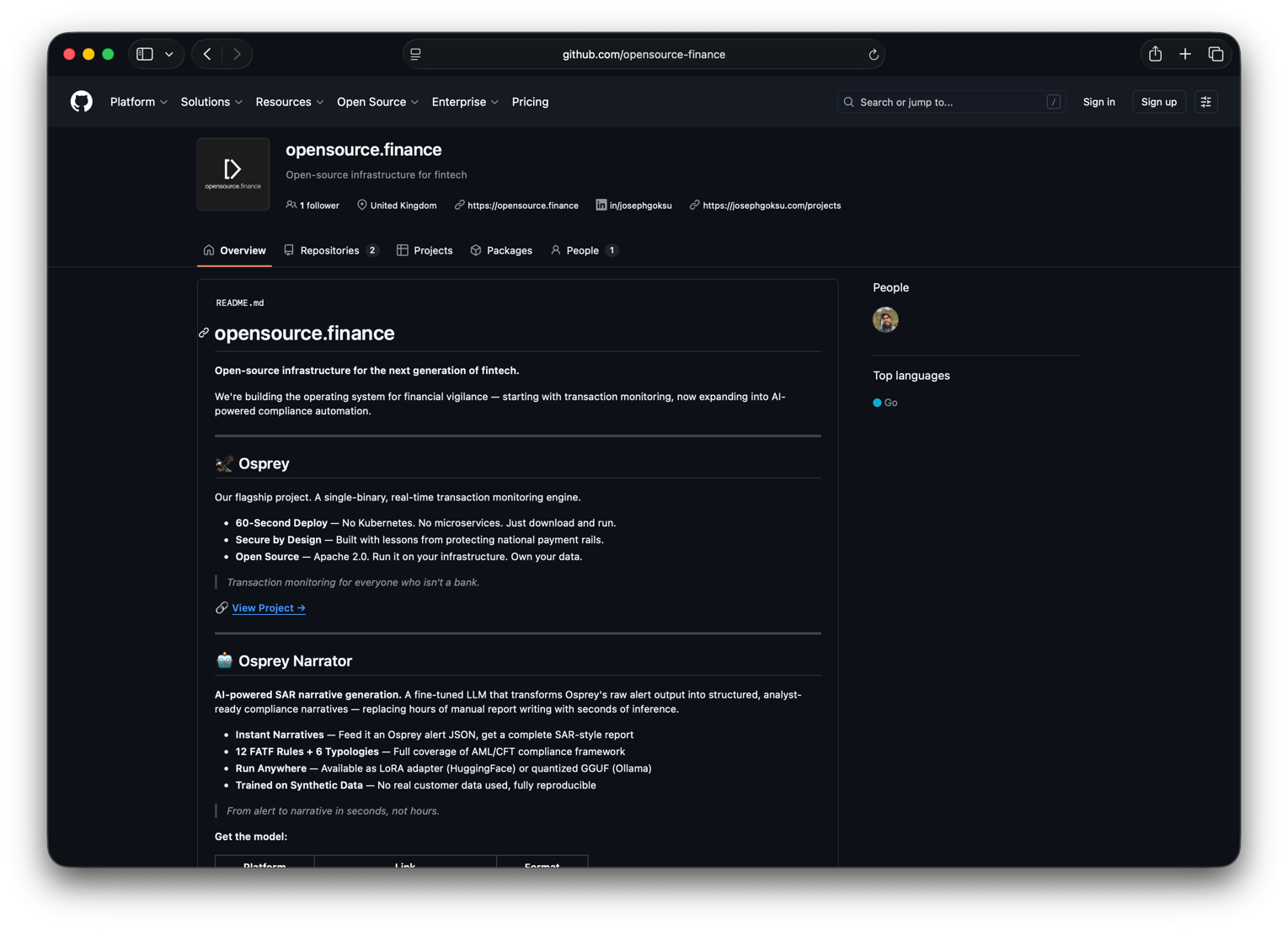

Osprey: open-source transaction monitoring. Go, single binary, 60-second deploy. Apache 2.0. The version I wish had existed.

# Seven transfers, 24 hours, one customer

tx_1042 09:14 TRY 198,500 -> acct_391

tx_1043 10:21 TRY 199,200 -> acct_412

tx_1044 11:08 TRY 197,800 -> acct_391

tx_1045 13:55 TRY 198,000 -> acct_507

tx_1046 17:02 TRY 199,500 -> acct_412

tx_1047 19:41 TRY 196,400 -> acct_507

tx_1048 22:18 TRY 195,800 -> acct_391

# Each one: under the TRY 200,000 declaration threshold.

# Together: structuring. A crime in itself.

MASAK requires banks to record source and purpose for any transfer of TRY 200,000 or more (effective 1 Jan 2026). Splitting amounts to stay under this threshold is structuring -- prosecutable on its own, regardless of where the funds come from.
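The pattern in the seven transfers above can be checked mechanically. The following is a minimal Python sketch, not Osprey's implementation: the function name `flag_structuring`, the `min_count` parameter, and the choice to anchor the 24-hour window at the first transfer are all assumptions made for illustration. The calendar date is a placeholder, since the slide gives only times of day.

```python
from datetime import datetime, timedelta

THRESHOLD = 200_000          # TRY declaration threshold quoted on the slide
WINDOW = timedelta(hours=24)

# The seven transfers from the slide: (id, time of day, amount in TRY, counterparty).
TRANSFERS = [
    ("tx_1042", "09:14", 198_500, "acct_391"),
    ("tx_1043", "10:21", 199_200, "acct_412"),
    ("tx_1044", "11:08", 197_800, "acct_391"),
    ("tx_1045", "13:55", 198_000, "acct_507"),
    ("tx_1046", "17:02", 199_500, "acct_412"),
    ("tx_1047", "19:41", 196_400, "acct_507"),
    ("tx_1048", "22:18", 195_800, "acct_391"),
]


def flag_structuring(transfers, min_count=3):
    """Return (flagged, total, count) for sub-threshold transfers in one window.

    Simplification: the window starts at the earliest sub-threshold transfer.
    """
    day = datetime(2026, 1, 5)  # placeholder date, not from the source
    below = []
    for _tx_id, hhmm, amount, _counterparty in transfers:
        hour, minute = map(int, hhmm.split(":"))
        if amount < THRESHOLD:
            below.append((day.replace(hour=hour, minute=minute), amount))
    if not below:
        return False, 0, 0
    below.sort()
    start = below[0][0]
    in_window = [amount for ts, amount in below if ts - start <= WINDOW]
    total = sum(in_window)
    flagged = len(in_window) >= min_count and total >= THRESHOLD
    return flagged, total, len(in_window)


flagged, total, count = flag_structuring(TRANSFERS)
print(flagged, total, count)
```

Each transfer passes a single-amount check on its own; only the aggregate over the window exposes the split.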

Deterministic rules. Same input, same output. Replayable.

Which rules fired, on which events, with what context.

Immutable record. Regulators will ask.

The model drafts. The analyst decides. Always.

Non-deterministic systems cannot own regulated decisions.

Suspicious activity reports are legal documents.

If a rule fired, the agent explains it. It does not dismiss it.

Evidence must point to data, not to model output.

Turn alert data into prose a human can read.

Pull transactions, profiles, graphs. Look up, do not invent.

Who, what, when, where, why, how. From facts, reviewed by a person.

"Source of funds: not in available data." That is a useful answer.
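The "look up, do not invent" behaviour can be sketched as a template that reads only fields present in the evidence. This is a minimal Python illustration; the field names and the `build_narrative` function are assumptions, not Osprey's schema.

```python
MISSING = "not in available data"

# Hypothetical field list: the six questions from the slide plus source of funds.
FIELDS = ["who", "what", "when", "where", "why", "how", "source_of_funds"]


def build_narrative(evidence: dict) -> dict:
    """Fill each narrative field from evidence, or mark it explicitly as missing."""
    draft = {}
    for field in FIELDS:
        value = evidence.get(field)
        draft[field] = str(value) if value not in (None, "") else MISSING
    # The draft never leaves this function marked as final.
    draft["status"] = "draft: analyst review required"
    return draft


evidence = {
    "who": "cust_77",
    "what": "seven transfers below TRY 200,000",
    "when": "within 24 hours",
}
draft = build_narrative(evidence)
for field, value in draft.items():
    print(f"{field}: {value}")
```

A missing field becomes an explicit statement rather than a guess, which is the useful answer the slide describes.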

Bedrock Guardrails on every model call · CloudTrail on every state change · IAM per tenant

# CEL rule: structuring under the MASAK threshold

expression: |

amount >= 180000.0 &&

amount < 200000.0 &&

currency == "TRY"
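For readers without a CEL runtime at hand, the expression above can be mirrored as a pure Python predicate. This only illustrates the rule's logic; the function name `near_threshold` is hypothetical, and the amounts are taken from the seven-transfer example.

```python
def near_threshold(amount: float, currency: str) -> bool:
    """Python mirror of the CEL expression: TRY amounts in [180000, 200000)."""
    return amount >= 180000.0 and amount < 200000.0 and currency == "TRY"


amounts = [198_500, 199_200, 197_800, 198_000, 199_500, 196_400, 195_800]
results = [near_threshold(a, "TRY") for a in amounts]

print(all(results))                       # True: every transfer matches
print(near_threshold(200_000.0, "TRY"))   # False: at the threshold, not under it
print(near_threshold(198_500.0, "USD"))   # False: wrong currency
```

The predicate has no hidden state, so replaying the same event gives the same result.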

# Same input, same output. Forever.

# Replayable. Auditable. Deterministic.

# Bedrock action group: the entire tool surface

tools:

- get_alert(alert_id)

- get_transactions(customer_id, window)

- get_rule_explanation(rule_id)

- get_counterparty_graph(customer_id, depth)

- draft_narrative(alert_id, evidence)
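One way to enforce a fixed tool list like the one above is an explicit allowlist at the dispatch layer. The sketch below is plain Python, not the Bedrock action group API; `dispatch` is a hypothetical name and the handlers are stubs standing in for real lookups.

```python
# Tool name -> exact argument names, copied from the tool list above.
ALLOWED_TOOLS = {
    "get_alert": ("alert_id",),
    "get_transactions": ("customer_id", "window"),
    "get_rule_explanation": ("rule_id",),
    "get_counterparty_graph": ("customer_id", "depth"),
    "draft_narrative": ("alert_id", "evidence"),
}


def dispatch(tool: str, args: dict, handlers: dict):
    """Run a tool only if it is allowlisted and called with exactly its arguments."""
    if tool not in ALLOWED_TOOLS:
        raise PermissionError(f"tool not allowed: {tool}")
    expected = set(ALLOWED_TOOLS[tool])
    if set(args) != expected:
        raise ValueError(f"{tool} expects arguments {sorted(expected)}")
    return handlers[tool](**args)


# Stub handlers that echo the call; real ones would query the data stores.
handlers = {
    name: (lambda _n=name, **kw: {"tool": _n, "args": kw})
    for name in ALLOWED_TOOLS
}

print(dispatch("get_alert", {"alert_id": "al_1"}, handlers))
```

Anything the model asks for outside these five names fails before any handler runs.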

# Five functions. That's the agent's whole world.

# A small tool surface is a safety feature.

Frontier APIs send your data out, give different answers, and cost money per word. A small fine-tuned model is private, consistent, and cheap.

4-bit quantised. Decoder-only. Bedrock-supported architecture (Qwen3ForCausalLM).

16.5M trainable / 4.0B base (0.41%). ~70 min on a T4 GPU (g4dn.xlarge equivalent).

FATF typologies: structuring, layering, smurfing, PEP exposure, shell companies, geographic risk, more.

Trained on 3,000 synthetic samples. No real customer data. Ships on Ollama and HuggingFace.

ollama.com/josephgoksu/osprey-narrator · huggingface.co/josephgoksu/osprey-narrator-v0.1 · Apache 2.0

# LoRA config (Unsloth + HuggingFace TRL)

base_model = "Qwen/Qwen3-4B-Instruct-2507" # 4-bit quantised

lora_rank = 8

lora_alpha = 16

target_modules = ["q_proj", "k_proj", "v_proj", "o_proj",

"gate_proj", "up_proj", "down_proj"]

trainable_params = 16_500_000 # 0.41% of 4.0B base

max_seq_length = 4096

# Training run

dataset_size = 3_000 # synthetic alert -> narrative pairs

epochs = 1

batch_size = 2 # effective 8 with grad accumulation

learning_rate = 2e-5

total_steps = 375

duration = "~70 min" # single NVIDIA T4 (16 GB)

final_test_loss = 0.976 # perplexity 2.65

# AWS path: same recipe runs on g4dn.xlarge (T4) or g5.xlarge (A10G)

# For Bedrock Custom Model Import: merge LoRA, export safetensors, S3.

Unsloth gives ~2x training speedup over plain HF Transformers on the same GPU. Synthetic-only training: no real customer or transaction data was used.
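The training figures quoted in the config are consistent with one another, which a few lines of Python can confirm. Two assumptions are made here: the reported test loss is a mean cross-entropy in nats (so perplexity is its exponential), and the gradient accumulation factor is inferred from "effective 8" rather than stated in the source.

```python
import math

samples = 3_000
per_device_batch = 2
effective_batch = 8
epochs = 1
final_test_loss = 0.976

# Inferred, not stated in the config: 8 / 2 accumulation steps.
grad_accum_steps = effective_batch // per_device_batch

# One optimizer step per effective batch.
total_steps = epochs * samples // effective_batch

# Perplexity is exp(mean cross-entropy loss).
perplexity = math.exp(final_test_loss)

# Trainable share, using the rounded 4.0B base figure from the slide.
trainable_pct = 100 * 16_500_000 / 4_000_000_000

print(grad_accum_steps, total_steps)
print(f"perplexity ~ {perplexity:.2f}, trainable ~ {trainable_pct:.2f}%")
```

The step count and perplexity match the 375 and 2.65 in the config; the trainable share comes out near the quoted 0.41%.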

Ollama when you control the machine. Bedrock CMI when you want AWS to manage it.

Runs on a laptop. Free. Starts instantly. Good for development, demos, on-prem.

Hosted by AWS. Scales to zero when idle. Pay only when used. IAM and audit built in.

No wifi. No frontier API. Just a small open model running on the laptop in my lap.

Different model from the Narrator (this one is general-purpose, 32B). Same setup: open weights, Ollama, no API.

Active alerts, transaction state, customer profiles. Hot reads, low latency.

Every event, every rule firing, every model call. Object Lock.

Who deployed which rule, when, with which IAM role.

The product is not the model. The product is the audit trail that lets a regulator believe you.

Guardrails evaluate input before the model sees it.

The model cannot claim guilt or invent unfired rules.

Account numbers, IDs, addresses. Once configured, always applied.

Only this alert, only this evidence. No legal or financial advice.
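The masking idea can be illustrated with a small regex pass over text before it reaches a model. This is not the Bedrock Guardrails API, which is configured on the AWS side; the patterns below are assumptions that only cover the `acct_` and `cust_` identifier shapes used in this talk's examples.

```python
import re

# Hypothetical identifier patterns, based on the example IDs in this talk.
PATTERNS = {
    "ACCOUNT": re.compile(r"\bacct_\d+\b"),
    "CUSTOMER": re.compile(r"\bcust_\d+\b"),
}


def mask(text: str) -> str:
    """Replace each matched identifier with a {LABEL} placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"{{{label}}}", text)
    return text


masked = mask("Customer cust_77 sent TRY 198,500 to acct_391")
print(masked)
```

Applying the same function on every call path is what makes the masking "always applied" rather than opt-in.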

INPUT: TRY 198,500 transfer to acct_391

RULES FIRED: threshold_split, rapid_movement

DRAFT (analyst review required):

"Customer cust_77 made seven transfers in 24 hours,

each below the TRY 200,000 MASAK declaration threshold.

Three recurring counterparties. Total: TRY 1,385,200.

Source of funds: not in available data.

Next step: analyst review."

source = "fintech.${tenant}"

Every invocation carries tenant_id.

Partition key starts with tenant_id.

Per-tenant prefix scoped by IAM.

Agent invocation includes tenant.

Different tenants, different rules.

A leaked alert across tenants is not a bug. It is a contract violation. Isolate at every layer.
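A minimal Python sketch of carrying the tenant through each layer follows. Only the `fintech.${tenant}` source string comes from the slide; the key and prefix formats, the tenant ID character set, and every function name are hypothetical.

```python
import re

TENANT_RE = re.compile(r"^[a-z0-9_-]+$")  # assumed tenant ID format


def check_tenant(tenant_id: str) -> str:
    """Reject tenant IDs that could collide with the '#' or '/' separators."""
    if not TENANT_RE.match(tenant_id):
        raise ValueError(f"invalid tenant id: {tenant_id!r}")
    return tenant_id


def event_source(tenant_id: str) -> str:
    return f"fintech.{check_tenant(tenant_id)}"


def partition_key(tenant_id: str, alert_id: str) -> str:
    # Partition key starts with tenant_id.
    return f"{check_tenant(tenant_id)}#alert#{alert_id}"


def object_prefix(tenant_id: str) -> str:
    # Per-tenant prefix that an IAM policy could be scoped to.
    return f"tenants/{check_tenant(tenant_id)}/"


def assert_same_tenant(caller_tenant: str, key: str) -> None:
    """Refuse any read whose key does not belong to the caller's tenant."""
    if not key.startswith(f"{check_tenant(caller_tenant)}#"):
        raise PermissionError("cross-tenant access refused")


key = partition_key("bank_a", "al_1042")
assert_same_tenant("bank_a", key)  # passes silently
print(event_source("bank_a"), key, object_prefix("bank_a"))
```

The application-level check is a second line of defence; the per-tenant IAM scoping described above remains the primary boundary.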

Five things I added late. You should add them first.

I bolted orchestration on at v0.4. Start with the workflow on day one, even if it has one step.

Shipped on Ollama first. CMI gets you IAM, audit, and scale-to-zero from the first deploy.

Retrofitted as event-driven memory. Stream into Bedrock for context recall from day one.

Configure before the first NAT Gateway invoice arrives. Mine arrived first.

Designed in, not bolted on. Treat them as a hard requirement, not a launch-week task.

The agent's draft is not evidence. The data behind it is.

Same input, same result. Always.

Non-deterministic systems cannot own regulated decisions.

Every state transition logged. Forever.

Same shape works for your problem too. Swap three things and the architecture stays the same.

Google's Common Expression Language. Sandboxed, embeddable, language-agnostic. Write detection rules for any domain; Osprey runs them.

Small open model + LoRA + ~3K synthetic samples. Swap the prose: clinical notes, runbooks, refund letters, support replies.

EventBridge → Lambda → DynamoDB/S3 → Bedrock CMI → human review. Different events, same audit trail.

Caveat: regulated decisions still need a human in the loop. The pattern travels — the responsibility doesn't.

Go binary, Apache 2.0, CEL rules, 60-second deploy. Fork it, write your own rules.

Qwen3-4B + LoRA. Q4_K_M GGUF on Ollama, safetensors for Bedrock CMI.

Linux Foundation real-time fraud platform. Digital Public Good.

First evidence, then narrative. The model drafts. The analyst decides.

josephgoksu.com · opensource.finance · github.com/josephgoksu